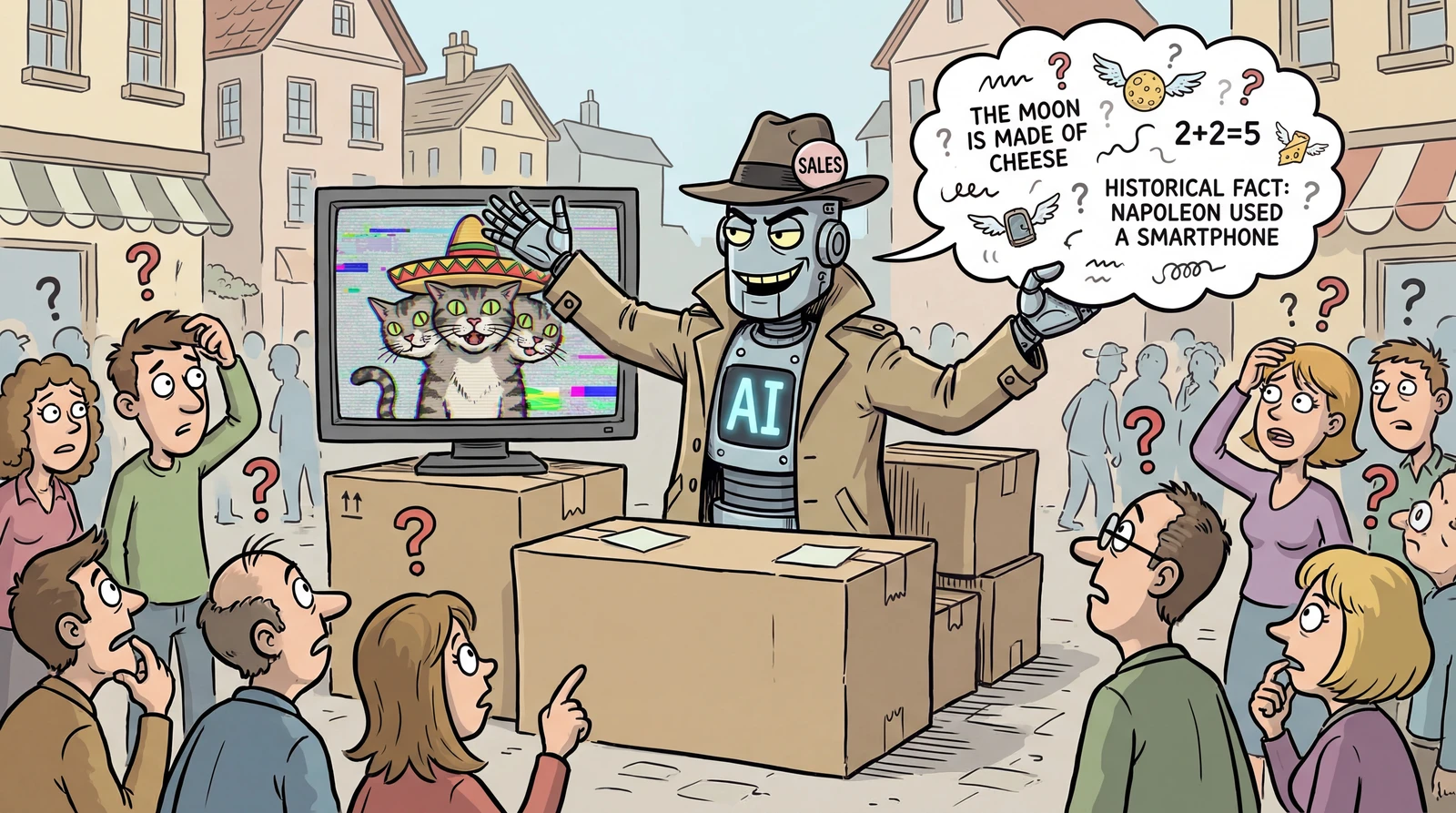

Why Does AI Confidently Lie?

Have you ever asked AI a question, only for it to cite sources that don't exist — or state completely made-up facts with absolute confidence?

That's called AI hallucination.

In this guide, we'll break down what hallucination actually is, why it happens, how far we've come in fixing it as of 2026, and what you can do to protect yourself.

Contents

- 1. What Is Hallucination?

- Common Types of AI Hallucination

- 2. Why Does Hallucination Happen?

- ① AI Has No Concept of Truth

- ② The Training Data Itself Is Flawed

- ③ Vague Questions Invite Made-Up Answers

- ④ AI Was Never Trained to Say “I Don't Know”

- ⑤ Fluent Doesn't Mean Accurate

- ⑥ Longer Conversations Mean More Mistakes

- 3. Hallucination Levels by AI Model in 2026

- 3-1. Vectara Hallucination Leaderboard

- 3-2. Other Evaluation Results

- 4. How to Reduce Hallucination

- ① Be Specific

- ② Feed It Your Own Sources

- ③ Explicitly Ask It to Flag Uncertainty

- ④ Break Big Questions into Smaller Steps

- ⑤ Always Double-Check What Matters

- 5. What's Next?

- 5-1. Real Progress Is Being Made

- 5-2. But “Perfect” Is Still a Long Way Off

- 6. Key Takeaways

- 7. References

1. What Is Hallucination?

The term borrows from the human experience of hallucinating — seeing things that aren't really there.

In the same way, AI sometimes generates information that doesn't exist and presents it as though it were fact.

Common Types of AI Hallucination

- Making things up: Confidently declaring “This restaurant has two Michelin stars” when it has never even been listed in the Michelin Guide

- Mixing up facts: Stating “This film was released in 2022 and won the Academy Award for Best Picture” when the year, the award, or both are wrong

- Giving bad recommendations: Suggesting “This medication works great for headaches” when the drug is actually prescribed for something entirely different

- Visual glitches: AI-generated images where people have six fingers or the text on signs is garbled nonsense

WARNING

In January 2025, a federal court in Minnesota encountered a bizarre case.

An expert declaration from a specialist on the dangers of AI and misinformation was submitted as evidence, but when the court looked into it, the declaration turned out to cite academic papers that do not exist. The expert acknowledged that the fabricated citations had come from ChatGPT. The court excluded the declaration and noted in its ruling:

“The irony.”

This case perfectly illustrates a core problem: AI would rather make up a convincing answer than simply say “I don't know.”

2. Why Does Hallucination Happen?

To understand why AI “lies,” you first need to understand how it actually works.

① AI Has No Concept of Truth

Think about a parrot. It can mimic human speech with perfect pronunciation and natural intonation — but it has no idea what any of the words mean. AI works in a surprisingly similar way.

AI has consumed an enormous volume of text from the internet and learned patterns — essentially, “after these words, these other words usually come next.” So when you ask “What is the capital of South Korea?”, it answers “Seoul” because that's the word that statistically follows “South Korea” and “capital” most often.

This approach is remarkably accurate for well-known facts.

But here's the catch: AI isn't checking whether something is true. It's just predicting what sounds right based on patterns. That's why it can produce answers that are perfectly fluent yet completely wrong — especially on niche topics or complex questions where training data is thin.
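To make the pattern-matching idea concrete, here is a toy sketch in Python. It is far simpler than a real language model: it only counts which word tends to follow the previous one or two words in a tiny made-up corpus. The corpus and the function names `train` and `predict_next` are illustrative assumptions. The point it shows is that the predictor returns the most frequent continuation without ever checking whether that continuation is true.

```python
from collections import Counter, defaultdict

# Tiny made-up corpus (an assumption for illustration only).
CORPUS = [
    "the capital of south korea is seoul",
    "the capital of south korea is seoul",
    "the capital of south korea is seoul",
    "the capital of france is paris",
]

def train(sentences):
    """Count which word follows each word, and each pair of words."""
    pair_counts = defaultdict(Counter)    # (word1, word2) -> next-word counts
    single_counts = defaultdict(Counter)  # word -> next-word counts
    for sentence in sentences:
        words = sentence.split()
        for i in range(len(words) - 1):
            single_counts[words[i]][words[i + 1]] += 1
            if i >= 1:
                pair_counts[(words[i - 1], words[i])][words[i + 1]] += 1
    return pair_counts, single_counts

def predict_next(model, prompt):
    """Pick the most frequent continuation; fall back to less context if needed."""
    pair_counts, single_counts = model
    words = prompt.split()
    last_two = tuple(words[-2:])
    if len(words) >= 2 and last_two in pair_counts:
        return pair_counts[last_two].most_common(1)[0][0]
    if words and words[-1] in single_counts:
        return single_counts[words[-1]].most_common(1)[0][0]
    return None

model = train(CORPUS)
print(predict_next(model, "the capital of south korea is"))  # seoul
print(predict_next(model, "the capital of france is"))       # paris
# "japan" never appears in the corpus, so the model falls back to
# "what usually follows 'is'" and answers just as readily, but wrongly.
print(predict_next(model, "the capital of japan is"))        # seoul
```

The last line is the toy version of a hallucination: where the data is thin, the predictor still produces the most familiar-sounding answer.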

② The Training Data Itself Is Flawed

AI learns from text across the internet. But the internet is a mixed bag:

- There are well-researched news articles, and there are factually wrong blog posts

- There are expert analyses, and there are baseless reviews and rumors

AI absorbs all of it without telling the difference. Imagine going to a library and reading every book on the shelf — including the ones someone snuck in that are full of nonsense.

③ Vague Questions Invite Made-Up Answers

If you ask “Recommend somewhere good to eat,” AI has no idea where you live, what cuisines you enjoy, or how much you want to spend. So it fills in the blanks with whatever sounds plausible. This is one of the most common triggers for hallucination.

④ AI Was Never Trained to Say “I Don't Know”

During training, AI is rewarded for producing helpful-sounding answers.

The result? It develops a habit of answering even when it shouldn't. Think of it like a student who'd rather guess on an exam than leave a question blank.
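The exam analogy can be put into numbers. The sketch below assumes a simple grading scheme (one point for a correct answer, nothing for “I don't know,” and an optional penalty for a wrong answer). The scheme and the probabilities are illustrative assumptions, not a description of how any particular model is trained. It shows that when wrong answers cost nothing, guessing beats abstaining even at low confidence.

```python
ABSTAIN_SCORE = 0.0  # "I don't know" earns nothing in this assumed scheme

def expected_guess_score(p_correct, wrong_penalty=0.0):
    """Expected score of guessing: +1 if right, -wrong_penalty if wrong."""
    return p_correct * 1.0 - (1.0 - p_correct) * wrong_penalty

def best_action(p_correct, wrong_penalty=0.0):
    """Choose whichever action has the higher expected score."""
    guess = expected_guess_score(p_correct, wrong_penalty)
    return "guess" if guess > ABSTAIN_SCORE else "abstain"

# Only 20% sure, but wrong answers are free: guessing still pays.
print(best_action(0.2))                     # guess
# Same confidence, but a wrong answer now costs a point.
print(best_action(0.2, wrong_penalty=1.0))  # abstain
```

Under this toy scheme, abstaining only becomes the better choice once wrong answers carry a cost.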

⑤ Fluent Doesn't Mean Accurate

AI is trained to produce smooth, confident-sounding prose.

The problem is that it sounds just as confident when it's wrong. We naturally trust people who speak with authority, and AI output triggers the same instinct: incorrect information is delivered so convincingly that it's easy to take at face value.

⑥ Longer Conversations Mean More Mistakes

Ever notice that AI gets less reliable the longer your conversation goes? That's not a coincidence — it's built into how the technology works.

- It loses track of earlier context: As the conversation grows, AI's “working memory” fills up and it starts dropping conditions or details you mentioned at the beginning. It's like sitting in a two-hour meeting and forgetting what was decided in the first ten minutes.

- Small errors compound: If AI makes a minor mistake early on and keeps building on it, the errors snowball with every subsequent response.

- Attention gets stretched thin: AI has to spread its “attention” across the entire conversation. The longer the thread, the less attention each part gets, which means a higher chance of missing key context and drifting off course.
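The compounding effect is easy to underestimate, so here is a rough back-of-the-envelope sketch. It assumes every response is correct with the same probability and that responses are independent of each other. Both are simplifying assumptions, and the 98% figure is made up for illustration. Real conversations are messier, but the trend is the same.

```python
def chance_all_correct(p_per_response, n_responses):
    """Probability that every one of n independent responses is correct."""
    if not 0.0 <= p_per_response <= 1.0:
        raise ValueError("p_per_response must be between 0 and 1")
    return p_per_response ** n_responses

# Assumed per-response accuracy of 98% (illustrative only).
for n in (1, 10, 50):
    print(n, round(chance_all_correct(0.98, n), 3))
```

Even with a high per-response accuracy, the chance of an error-free thread keeps shrinking as the thread gets longer.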

NOTE

When a conversation starts feeling too long, open a fresh chat and restate the key points from scratch. This simple habit can dramatically cut down on hallucinations.

3. Hallucination Levels by AI Model in 2026

Hallucination rates vary widely depending on how you measure them.

3-1. Vectara Hallucination Leaderboard

This benchmark measures how much content AI fabricates — information not present in the source document — when performing a summarization task.

| AI Model | Hallucination Rate |
|---|---|
| Google / Gemini 2.5 Flash Lite | 3.3% |
| OpenAI / GPT 4.1 | 5.6% |
| xAI / Grok 3 | 5.8% |
| OpenAI / GPT 5.4 | 7% |
| Google / Gemini 2.5 Pro | 7% |
| Google / Gemini 2.5 Flash | 7.8% |
| Google / Gemini 3.1 Flash Lite | 8.2% |
| OpenAI / GPT 4o | 9.6% |
| Anthropic / Claude Sonnet 4 | 10.3% |
| Google / Gemini 3.1 Pro | 10.4% |
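As a rough sketch of what a percentage like these means: if each summary is judged as either faithful to its source or containing something the source never said, the rate is simply the share of summaries in the second group. The judgments below are made up, and the function name is an assumption. The leaderboard uses its own dataset and evaluation method, which this sketch does not reproduce.

```python
def hallucination_rate(judgments):
    """Percentage of summaries judged to contain unsupported content."""
    if not judgments:
        raise ValueError("need at least one judged summary")
    return 100.0 * sum(judgments) / len(judgments)

# True means the summary added something not found in the source document.
# 30 made-up judgments, one of which is a fabrication.
sample = [False] * 29 + [True]
print(f"{hallucination_rate(sample):.1f}%")  # 3.3%
```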

3-2. Other Evaluation Results

The Vectara leaderboard focuses on document summarization, which tends to produce lower hallucination rates. In open-ended Q&A — the way most people actually use AI — the picture looks very different.

- AIMultiple Benchmark: When 37 AI models were tested on 60 questions, even the latest models hallucinated more than 15% of the time. Legal questions saw roughly 18.7% errors, and medical questions around 15.6%.

- AA-Omniscience Benchmark: On challenging knowledge questions, all but 3 of the models tested generated hallucinations more often than correct answers.

4. How to Reduce Hallucination

You can't eliminate hallucination entirely, but you can cut it down dramatically.

① Be Specific

- Bad: “Tell me about traveling to Tokyo”

- Good: “I'm planning a 4-day trip to Tokyo in early April, focused on cherry blossom spots. My budget is around $700 per person. Can you build an itinerary?”

The more detail you provide, the less room AI has to improvise.
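If you send similar requests often, the habit can be turned into a small checklist. The sketch below is one possible way to do it, using the Tokyo example. The list of required details and the function names are assumptions chosen for illustration, and you would adapt them to your own kind of question.

```python
# Details this kind of request should always include (an assumed checklist).
REQUIRED_DETAILS = ("dates", "length", "budget", "focus")

def missing_details(details):
    """List the required details that were left out or left empty."""
    return [name for name in REQUIRED_DETAILS if not details.get(name)]

def build_prompt(task, details):
    """Turn a task plus explicit details into one specific request."""
    lines = [task.strip()]
    for name, value in details.items():
        lines.append(f"- {name}: {value}")
    return "\n".join(lines)

details = {
    "dates": "early April",
    "length": "4 days",
    "budget": "around $700 per person",
    "focus": "cherry blossom spots",
}

# A vague request leaves most of the checklist empty.
print(missing_details({"dates": "early April"}))  # ['length', 'budget', 'focus']
# A specific request leaves nothing for the AI to fill in on its own.
print(missing_details(details))                   # []
print(build_prompt("Build an itinerary for a trip to Tokyo.", details))
```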

② Feed It Your Own Sources

Give AI the raw material to work with. For example:

“Summarize only what's in this article” (then paste the full text, or the URL if your AI tool can open links)

When AI has a concrete source to draw from, it's far less likely to make things up.
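Supplying the source has a second benefit: the answer becomes checkable. As a minimal sketch, the snippet below compares the numbers in a summary against the numbers in the source text and reports any that appear only in the summary. It is a crude check (it looks only at digits, not at names or claims), and the sample texts and function name are made up for illustration.

```python
import re

# Matches whole numbers and simple decimals such as 12 or 3.5.
NUMBER = re.compile(r"\d+(?:\.\d+)?")

def unsupported_numbers(source, summary):
    """Return numbers that appear in the summary but nowhere in the source."""
    source_numbers = set(NUMBER.findall(source))
    summary_numbers = set(NUMBER.findall(summary))
    return sorted(summary_numbers - source_numbers)

source = "Revenue rose 12% to 340 million in 2024."
summary = "Revenue rose 15% to 340 million in 2024."
print(unsupported_numbers(source, summary))  # ['15']
```

An empty result does not prove the summary is right, but a non-empty one is a quick signal that something was invented or altered.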

③ Explicitly Ask It to Flag Uncertainty

“If you're not sure about something, mark it as ‘needs verification’ and cite your sources.”

This one sentence makes a surprising difference. It nudges AI toward honesty and sourcing rather than confident guessing.
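If you use AI through a script rather than a chat window, the same sentence can be appended automatically, and the flagged lines can be pulled out for follow-up. The sketch below assumes the AI uses the marker phrase as instructed, which is not guaranteed. The sample response and function names are made up for illustration.

```python
UNCERTAINTY_INSTRUCTION = (
    "If you're not sure about something, mark it as 'needs verification' "
    "and cite your sources."
)

def with_uncertainty_request(prompt):
    """Append the uncertainty instruction to any prompt."""
    return f"{prompt.strip()}\n\n{UNCERTAINTY_INSTRUCTION}"

def flagged_lines(response, marker="needs verification"):
    """Return the lines of a response that carry the uncertainty marker."""
    return [
        line.strip()
        for line in response.splitlines()
        if marker in line.lower()
    ]

# Made-up response text, standing in for real AI output.
sample_response = (
    "The museum opens at 9 a.m.\n"
    "Admission is free on Mondays (needs verification).\n"
    "It is a 10-minute walk from the station."
)
print(flagged_lines(sample_response))
```

The flagged lines become a ready-made to-do list for fact-checking.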

④ Break Big Questions into Smaller Steps

Dumping a complex, multi-part question on AI all at once is a recipe for errors. Breaking it into steps lets you catch mistakes before they snowball.
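The step-by-step habit can be sketched as a simple loop: ask one step, review the answer, and only then move on. In the sketch below, `ask` and `review` are placeholders you would supply yourself (for example, a call to your AI tool and a manual check). The stub versions shown here are hypothetical and exist only so the example runs.

```python
def run_steps(steps, ask, review):
    """Ask one step at a time; stop at the first answer the reviewer rejects.

    Returns the accepted (step, answer) pairs and the step that failed, if any.
    """
    accepted = []
    for step in steps:
        # Pass along only the answers that have already been checked.
        context = "\n".join(f"{q} -> {a}" for q, a in accepted)
        answer = ask(step, context)
        if not review(step, answer):
            return accepted, step
        accepted.append((step, answer))
    return accepted, None

# Hypothetical stand-ins for a real AI call and a real human review.
def ask_stub(step, context):
    return f"draft answer for: {step}"

def review_stub(step, answer):
    return "budget" not in step  # pretend the budget answer looked wrong

steps = ["List cherry blossom spots", "Estimate the budget", "Draft the itinerary"]
accepted, failed_step = run_steps(steps, ask_stub, review_stub)
print(len(accepted), failed_step)
```

Because the loop stops at the first rejected answer, a mistake in step two never becomes the foundation for step three.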

⑤ Always Double-Check What Matters

Whether it's a restaurant recommendation, medical information, or a historical claim — if it matters, verify it with a quick search or an official source. Treat AI output as a strong starting point, not the final word.

5. What's Next?

5-1. Real Progress Is Being Made

AI hallucination is measurably decreasing.

- For grounded tasks like document summarization, hallucination rates are now in the low single digits for the best models (3.3% for the top entry in the table in section 3-1), and some evaluations report rates below 1%. When you give AI reference material to work with, fabrication becomes far less common.

- Each generation of models gets more accurate. The latest releases from late 2025 and early 2026 (GPT-5.2, Opus 4.5, and others) show clear improvements.

5-2. But “Perfect” Is Still a Long Way Off

Let's be honest: completely eliminating hallucination isn't possible yet.

As long as AI fundamentally works by predicting the next word, occasional mistakes are baked into the technology.

- Some experts believe that by around 2027, hallucination will reach “a level most people won't need to worry about in everyday use.”

- Others urge caution, pointing out that “people said the same thing in 2023, and we're still not there.”

The realistic goal isn't zero hallucination. It's a hallucination rate low enough to live with.

Think of AI as a brilliant but imperfect colleague. It will get things wrong sometimes. The key is knowing that — and planning for it.

6. Key Takeaways

- AI predicts, it doesn't “know”: A confident answer isn't necessarily a correct one

- Accuracy depends on the task: Grounded summarization errors are in the low single digits for the best models (and under 1% in some evaluations), but open-ended questions still see 15%+ error rates

- Your habits are your best defense: Be specific, provide sources, and always cross-check

- AI is a partner, not an oracle: The final call is always yours

7. References

- Short Circuit Court: AI Hallucinations in Legal Filings and How To Avoid Making Headlines: https://www.coleschotz.com/news-and-publications/short-circuit-court-ai-hallucinations-in-legal-filings-and-how-to-avoid-making-headlines/

- Vectara Hallucination Leaderboard: huggingface.co/spaces/vectara/leaderboard

- AIMultiple, AI Hallucination: Compare top LLMs (2026.01): aimultiple.com/ai-hallucination

- Artificial Analysis, AA-Omniscience Knowledge & Hallucination Benchmark: artificialanalysis.ai/articles/aa-omniscience-knowledge-hallucination-benchmark

- Suprmind, AI Hallucination Rates & Benchmarks in 2026: suprmind.ai/hub/ai-hallucination-rates-and-benchmarks

- Suprmind, AI Hallucination Statistics: Research Report 2026: suprmind.ai/hub/insights/ai-hallucination-statistics-research-report-2026